
Shannon entropy

2/20/2023

Shannon’s concept of entropy can now be taken up. Recall that the table "Comparison of two encodings from M to S" showed that the second encoding scheme would transmit an average of 5.7 characters from M per second. But suppose that, instead of the distribution of characters shown in the table, a long series of As were transmitted. Because each A is represented by just a single character from S, this would result in the maximum transmission rate, for the given channel capacity, of 10 characters per second from M. On the other hand, transmitting a long series of Ds would result in a transmission rate of only 3.3 characters from M per second, because each D must be represented by 3 characters from S. The average transmission rate of 5.7 is obtained by taking a weighted average of the lengths of each character code and dividing the channel speed by this average length.
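The arithmetic behind these three rates can be written out as a short worked calculation. The symbols are notation assumed here for illustration, not the article's own: C is the channel speed in characters from S per second (10, as implied by the all-A case), p_i is the probability of the i-th character of M, ℓ_i is the length of its code, L̄ is the average code length, and R is the resulting rate in characters from M per second. The table's probabilities are not reproduced in this excerpt, so the last line only back-solves the average length implied by the quoted rate of 5.7.

```latex
\begin{align*}
\bar{L} &= \sum_i p_i \, \ell_i, \qquad R = \frac{C}{\bar{L}}, \qquad C = 10 \\
R_{\text{all A}} &= \frac{10}{1} = 10 \\
R_{\text{all D}} &= \frac{10}{3} \approx 3.3 \\
R = 5.7 \;&\Longrightarrow\; \bar{L} = \frac{10}{5.7} \approx 1.75
\end{align*}
```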

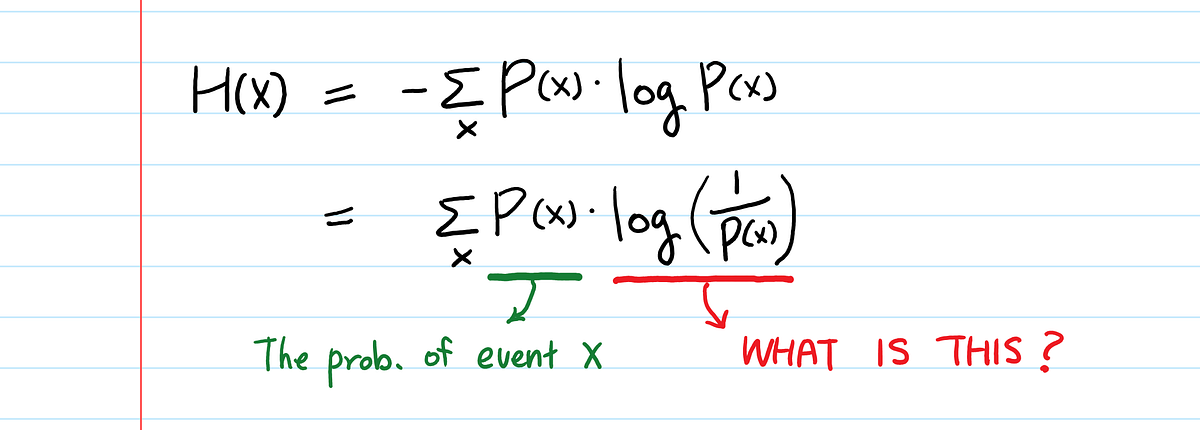

From the book Computer Science: An Interdisciplinary Approach by Sedgewick & Wayne:

The Shannon entropy measures the information content of an input string and plays a cornerstone role in information theory and data compression. Given a string of n characters, let f(c) be the frequency of occurrence of character c. The quantity p(c) = f(c)/n is an estimate of the probability that c would be in the string if it were a random string, and the entropy is defined to be the sum of the quantity -p(c)*log2(p(c)), over all characters that appear in the string. The entropy is said to measure the information content of a string: if each character appears the same number of times, the entropy is at its maximum value among strings of a given length. Write a program that takes the name of a file as a command-line argument and prints the entropy of the text in that file. Run your program on a web page that you read regularly, a recent paper that you wrote, and the E. [...]

Here is my program: public class ShannonEntropy, with the methods public static String removeUnnecessaryChars(), public static String convertTextToString() (commented "this method (below) is written specifically for texts without [...]"), and public static int findFrequencies(String text), along with a char ALPHABET = [...] declaration.

You are doing a large amount of looping over the text, and the alphabet, then the frequencies, then the probabilities, etc. Is there any way to do it all with minimal looping? In your first loops, there is no need for the inner loop on the alphabet. Just increment the count of each character in the text and accumulate a count of the characters present; there is no need to even specify an alphabet.
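The counting approach suggested in the reply can be sketched roughly as follows. This is a minimal illustration, not the original poster's program: the class name EntropySketch and the method entropy are hypothetical, characters are treated as Java char values (UTF-16 code units), the file is assumed to be UTF-8, and an empty string is assumed to have entropy 0.

```java
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Paths;
import java.util.HashMap;
import java.util.Map;

// Hypothetical sketch; the class and method names are illustrative only.
final class EntropySketch {

    // Returns the sum of -p(c) * log2(p(c)) over the characters present in text.
    static double entropy(String text) {
        int n = text.length();
        if (n == 0) {
            return 0.0; // assumption: an empty string has entropy 0
        }
        // One pass over the text: count each character as it is seen,
        // so no predefined alphabet is needed.
        Map<Character, Integer> counts = new HashMap<>();
        for (int i = 0; i < n; i++) {
            counts.merge(text.charAt(i), 1, Integer::sum);
        }
        // One short pass over the distinct characters only.
        double h = 0.0;
        for (int f : counts.values()) {
            double p = (double) f / n;
            h -= p * (Math.log(p) / Math.log(2)); // log base 2 via change of base
        }
        return h;
    }

    // Usage: java EntropySketch <file>
    public static void main(String[] args) throws IOException {
        byte[] bytes = Files.readAllBytes(Paths.get(args[0]));
        System.out.println(entropy(new String(bytes, StandardCharsets.UTF_8)));
    }
}
```

With this shape the text is scanned once, and the only other loop runs over the distinct characters actually found, which is usually far fewer than the length of the text.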
